Hunting a Windows ARM crash through Rust, C, and build-system configurations

A mimalloc 3.3 upgrade crashed Windows ARM64 CI with STATUS_ACCESS_VIOLATION. The trail ran through Rust build scripts, C atomics, crash dumps, and a subtle load-vs-CAS bug.

Sampo Kivistö

Founder & CEO

A routine allocator update turned into one of the most interesting debugging sessions I have had in a while.

My Rust projects GitComet and AutoExplore both use mimalloc for memory allocation. It has been a great fit for both and has reduced memory usage noticeably, so updating it felt like routine maintenance.

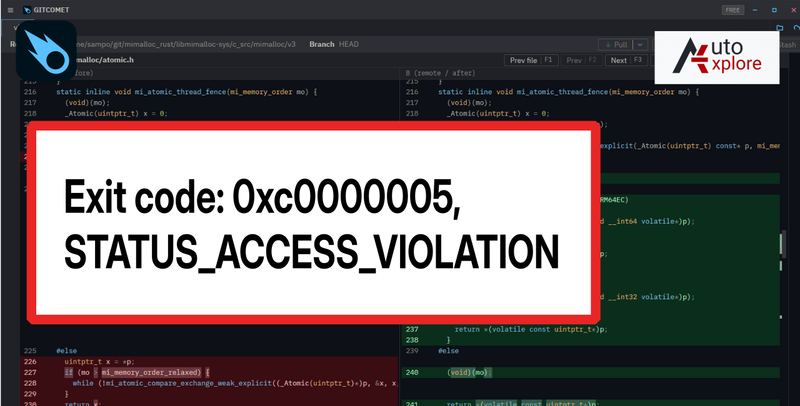

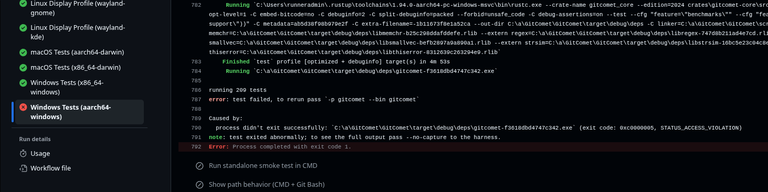

Upgrading to mimalloc 3.3 suddenly broke the Windows ARM64 CI pipeline. Linux passed. macOS passed. x86_64 Windows passed. Only aarch64-pc-windows-msvc failed, and it failed hard:

(exit code: 0xc0000005, STATUS_ACCESS_VIOLATION)

No stack trace. No helpful panic. No obvious clue.
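The exit code itself is a Windows NTSTATUS value, which Rust's `ExitStatus::code()` reports as a signed 32-bit integer. As a minimal sketch, a CI helper could turn that number back into something readable. The function name `describe_exit_code` and the short list of statuses are my own choices for illustration, not anything from the project.

```rust
/// Render a process exit code in the hex form Windows tooling prints,
/// with a name for a few well-known NTSTATUS values.
/// Hypothetical helper: the table below is deliberately tiny.
fn describe_exit_code(code: i32) -> String {
    // Reinterpret the bits as unsigned; crash codes have the high bit set,
    // so they show up as negative numbers in an i32.
    let raw = code as u32;
    let name = match raw {
        0xC000_0005 => "STATUS_ACCESS_VIOLATION",
        0xC000_00FD => "STATUS_STACK_OVERFLOW",
        0xC000_0409 => "STATUS_STACK_BUFFER_OVERRUN",
        _ => "unknown",
    };
    // Width 10 covers the "0x" prefix plus eight hex digits.
    format!("{:#010x} ({})", raw, name)
}
```

Feeding it the value from `std::process::ExitStatus::code()` is enough to make a log line say what kind of crash happened, even when nothing else does.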

That was the moment this stopped being a dependency update and turned into a bug hunt.

The kind of failure that gives you nothing

The only change in the pull request was the mimalloc upgrade, and the previous version did not produce the error.

That narrowed the problem down enough that I opened an issue in mimalloc, because the regression looked real. The maintainer suggested trying -DMI_WIN_INIT_USE_TLS_DLLMAIN=1, which was a good lead, but I hit another problem immediately: I was using mimalloc through the Rust crate mimalloc_rust, and I did not yet understand how to pass that kind of flag through the Rust build.

That part turned out to be unexpectedly educational.

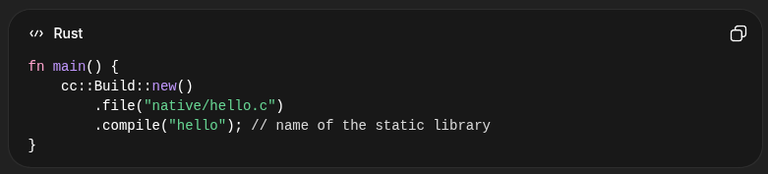

I had always treated mimalloc_rust like a normal Rust dependency, but under the hood it compiles a C codebase as part of the crate build. Looking through the sources, I found the cc crate, which is the standard bridge Rust uses to compile C, C++, assembly, and even CUDA during build.rs.

    fn main() {
        cc::Build::new()
            .file("native/hello.c")
            .compile("hello");
    }

That is the basic shape: a Rust crate can look ordinary from Cargo's point of view while still compiling native code during the build.
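To make the flag question concrete, here is a rough sketch of how a build script could accept extra C defines such as `MI_WIN_INIT_USE_TLS_DLLMAIN=1` from the environment. This is an assumption about one possible design, not how mimalloc_rust works: the function names and the idea of a comma-separated variable are hypothetical. Only the final hand-off, `cc::Build::define(name, value)`, is a real cc API, and it appears here only in a comment so the snippet stays standard-library only.

```rust
use std::env;

/// Parse a spec like "MI_WIN_INIT_USE_TLS_DLLMAIN=1,SOME_FLAG" into
/// (name, optional value) pairs. Hypothetical helper for illustration.
fn parse_defines(spec: &str) -> Vec<(String, Option<String>)> {
    spec.split(',')
        .map(str::trim)
        .filter(|item| !item.is_empty())
        .map(|item| match item.split_once('=') {
            // "NAME=VALUE" becomes a define with a value.
            Some((name, value)) => {
                (name.trim().to_string(), Some(value.trim().to_string()))
            }
            // A bare "NAME" becomes a define without a value.
            None => (item.to_string(), None),
        })
        .collect()
}

/// Read the spec from an environment variable chosen by the caller.
/// A missing variable simply means "no extra defines".
fn defines_from_env(var: &str) -> Vec<(String, Option<String>)> {
    let spec = env::var(var).unwrap_or_default();
    parse_defines(&spec)
}

// In a real build.rs, each pair would then be handed to the cc builder:
//     build.define(&name, value.as_deref());
```

The point of the sketch is only that the C compiler flags are ordinary data by the time build.rs runs, so anything the build script can read can end up as a `-D` on the compiler command line.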

First reaction: turn on more debugging

My first instinct was simple: compile mimalloc with more diagnostics and get a better failure.

I found that mimalloc exposes flags like MI_DEBUG and MI_SHOW_ERRORS through Cargo environment variables, so I turned those on and reran the failing job.

Same crash. Same exit code. Still no stack trace.

Normally that is where a bug like this stalls for a while.

Using CI as a remote debugger

Since I could not reproduce the problem locally, I tried to get the failing GitHub Actions runner to capture a dump at the moment of the crash.

I used ProcDump from a PowerShell script in CI to watch the test binary, catch the unhandled exception, and upload the resulting dump as an artifact. GPT-5.4 helped speed up that part by generating the right command-line plumbing for ProcDump and the artifact upload steps.
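For readers who want the general shape, here is a sketch of the ProcDump invocation expressed as an argument list. It is not the script from the CI job, which was PowerShell. It assumes the test binary is launched under ProcDump with `-x`, and the helper name `procdump_args` is hypothetical. The flags themselves (`-accepteula`, `-e`, `-ma`, `-x`) are standard ProcDump options.

```rust
/// Build the argument list for running a test binary under ProcDump.
/// Assumed usage: procdump -accepteula -e -ma -x <dump dir> <exe> [args...]
fn procdump_args(dump_dir: &str, test_binary: &str, test_args: &[&str]) -> Vec<String> {
    let mut args = vec![
        "-accepteula".to_string(), // skip the interactive license prompt on CI
        "-e".to_string(),          // write a dump on an unhandled exception
        "-ma".to_string(),         // full memory dump, not just thread stacks
        "-x".to_string(),          // launch the image and monitor it
        dump_dir.to_string(),      // folder that receives the .dmp file
        test_binary.to_string(),   // the executable to run
    ];
    // Anything after the image path is passed through to the test binary.
    args.extend(test_args.iter().map(|arg| arg.to_string()));
    args
}
```

The resulting vector can be passed to `std::process::Command::new("procdump.exe").args(...)`, and the dump folder can then be uploaded as a CI artifact.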

After a few iterations, I got the dump.

And finally, the failure had a shape:

    This dump file has an exception of interest stored in it.
    (290c.71c): Access violation - code c0000005
    gitcomet_5e0245ab8a6fea4a!_InterlockedCompareExchange64+0xc
    Attempt to write to address 00007ff743eb4b88

    STACK_TEXT:
    gitcomet_5e0245ab8a6fea4a!_InterlockedCompareExchange64+0xc
    gitcomet_5e0245ab8a6fea4a!_mi_malloc_generic+0x44
    gitcomet_5e0245ab8a6fea4a!_mi_theap_malloc_zero+0xd8
    gitcomet_5e0245ab8a6fea4a!std::sys::pal::windows::to_u16s::inner+0x60

The most important clue was that the crash happened inside _InterlockedCompareExchange64, with an access violation on Windows ARM64. In other words, something that looked like a read path was going through an atomic compare-and-exchange operation and touching memory it was not allowed to modify.

That was the first moment the bug became exciting instead of frustrating.

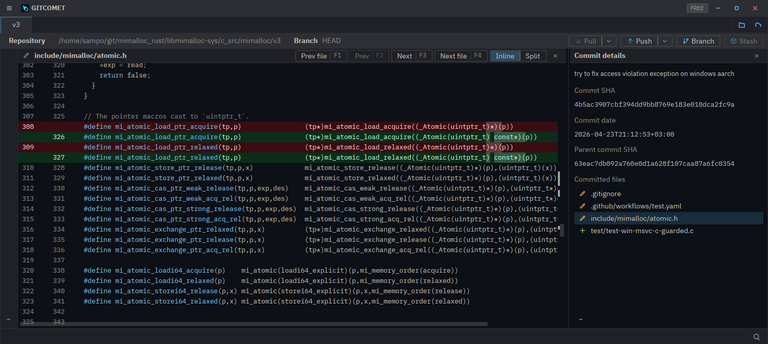

The bug was a load that was not really a load

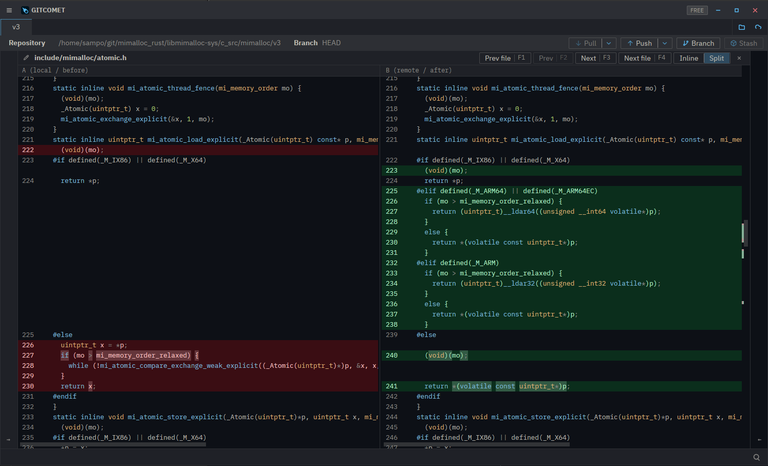

Once I had the dump, I started digging into mimalloc's atomic code. I am not a daily C or C++ engineer, so this meant slowing down and understanding what the code was actually trying to do.

In one code path, mimalloc was effectively using compare-and-exchange as if it were a load. That sounds harmless at first, because the code was comparing x and writing back the same x. But on ARM64, that is still not a plain read. It is a read-modify-write atomic operation.

That difference mattered because the pointer being accessed could point into sentinel objects like mi_theap_empty or mi_page_empty, which live in read-only memory.

- A code path wanted an ordered load.

- Instead of performing a true load, it used compare-and-exchange.

- On Windows ARM64, that became a write-capable atomic instruction.

- The target happened to be read-only sentinel storage.

- Windows raised STATUS_ACCESS_VIOLATION.

That is the kind of bug that can stay invisible for a long time because it depends on three things lining up at once: a specific architecture, a specific compiler lowering, and a specific object layout.

There was also a const-correctness problem in the pointer load macros. The old macros cast away const, which made it easier for the type system to accept a path that could mutate memory even when the underlying storage should have been treated as read-only. Fixing that did not just make the code prettier. It made the contract clearer: loads should accept read-only storage, and load helpers should stay loads.

The functional fix was even more important. The compare-and-exchange loop was removed from the load path and replaced with real ordered load operations on ARM, using the correct intrinsics for acquire semantics.

The old code was "reading by doing CAS," while the fixed code was "reading by doing an actual ordered load."

Once you see it that way, the crash makes perfect sense.
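The same contrast can be sketched with Rust's own atomics. This is an analogy, not mimalloc's C code, and the names `load_via_cas` and `load_acquire` are hypothetical. Both functions return the same value, but only the second is a pure read.

```rust
use std::sync::atomic::{AtomicUsize, Ordering};

/// "Reading by doing CAS": keep guessing the current value and swap it
/// with itself. The value never changes, but every attempt is a
/// read-modify-write operation that needs write access to the target.
fn load_via_cas(cell: &AtomicUsize) -> usize {
    let mut expected = 0;
    loop {
        match cell.compare_exchange_weak(
            expected,
            expected, // write back the same value on success
            Ordering::AcqRel,
            Ordering::Acquire,
        ) {
            Ok(previous) => return previous,
            // Wrong guess or spurious failure: retry with the observed value.
            Err(actual) => expected = actual,
        }
    }
}

/// "Reading by doing an actual ordered load": no store is ever attempted,
/// so read-only storage would be fine.
fn load_acquire(cell: &AtomicUsize) -> usize {
    cell.load(Ordering::Acquire)
}
```

In Rust the difference stays invisible, because atomics have interior mutability and are not placed in read-only memory. In C, where a sentinel object can sit in a read-only section, the first form is the one that faults.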

The real surprise was not just the bug, but how we built it

At that point I understood why the access violation happened, but why had this not been caught sooner?

The answer led back to the build system.

Upstream mimalloc was not being compiled the same way as the Rust wrapper in my project. On the MSVC and Windows ARM family of targets, the upstream build compiles the code as C++, while the Rust wrapper still compiled static.c as C through build.rs. In the C++ build, that particular atomic file was not included in the output, and the C++ path relied on its own atomics implementation.

So this was not just a low-level allocator bug. It was a mixture of:

- Rust and C

- Crate build scripts and upstream build logic

- Architecture-specific atomics and compiler behavior on Windows ARM64

That is exactly why it was so difficult to spot from the outside.

The workaround on our side was to compile mimalloc more like upstream does for Windows ARM and MSVC-family targets, including using the C++ compiler path there. I also raised the upstream issue and put together a PR on our side to address the Rust build differences.
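As a sketch of what that decision could look like inside a build script, the predicate below picks the C++ path from the target description. The exact policy is my assumption for illustration (Windows with the MSVC environment, or Windows on aarch64), and `compile_as_cpp` is a hypothetical name. The inputs correspond to the `CARGO_CFG_TARGET_OS`, `CARGO_CFG_TARGET_ENV`, and `CARGO_CFG_TARGET_ARCH` variables that Cargo sets for build scripts.

```rust
/// Decide whether the C sources should be compiled through the C++ compiler.
/// Assumed policy, not the actual patch: Windows targets that use the MSVC
/// environment or run on ARM64 take the C++ path, everything else stays C.
fn compile_as_cpp(target_os: &str, target_env: &str, target_arch: &str) -> bool {
    let is_windows = target_os == "windows";
    let is_msvc = target_env == "msvc";
    let is_arm64 = target_arch == "aarch64";
    is_windows && (is_msvc || is_arm64)
}

// In a real build.rs the result would feed the cc builder, for example:
//     build.cpp(compile_as_cpp(&os, &env, &arch));
```

Keeping the rule in one small function makes the difference from upstream explicit and easy to test, instead of leaving it implicit in whichever compiler the build happens to pick.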

Why I wanted to share this

I enjoyed this bug far more than I expected to.

It started with a useless exit code and ended up teaching me several things at once:

- how Rust crates can compile and ship C code under the hood

- how to capture crash dumps from CI even without direct access to the target device

- how small type-system lies around const can enable real platform bugs

- how one architecture can expose assumptions that seem harmless elsewhere

- how build-system differences can be just as important as source-code differences

That is why this felt worth writing up. It was not just "I fixed a crash." It was a reminder that some of the best debugging sessions force you to understand the layers below the one you normally work in.

If you enjoy this kind of investigation, GitComet is the tool we are building for exactly this style of work: engineers chasing real regressions through code diffs, dependency updates, and cross-platform behavior.